What’s the Magic Here?

The magic of the ChatSF application is that we provide GPT with metadata, not data, and it figures out how to retrieve the data it needs. Let me illustrate why that’s magical. Here’s a screen shot from a demo of Salesforce’s Prompt Editor given at Dreamforce:

The section in red is the prompt, and you can see that, for example, it is pulling in the FirstName field from the contact record. So the prompt that is sent to GPT (for that specific line of the prompt) is “The customer’s name is Nya”. GPT itself has no idea where that came from. Is Nya a contact? A user? A (heaven forbid!) person account? Not that it matters that much, because if it did know, it couldn’t do much with it.

In ChatSF, the code doesn’t spoon feed GPT all the data we think GPT needs1. How could we? Do we know what data is needed? Nope, we don’t. The prompt above can, because its goal is to elicit a specific, defined-in-advance response. But in a fluid chat environment, the user is unconstrained. We don’t know what they’re going to ask for. A list of contacts sorted by middle name? Why not?

To be fair to Salesforce, this is not a criticism of the prompt editor … (see footnote)2

Interested in piloting a project like this, but with more capability? Ping the consultants at Owls Head to find out how! They don’t bite … but they give a hoot!3

In ChatSF, therefore, we don’t try to anticipate what is needed by GPT to answer the user’s question. Because we can’t.

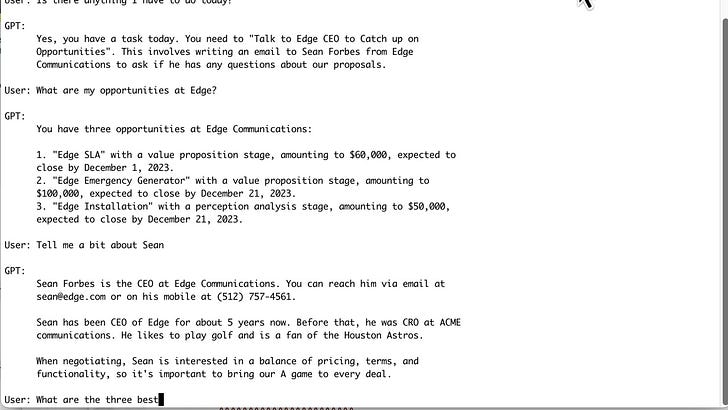

So when the user writes:

Write an email that I can send to Sean asking him to join me for a round of golf to discuss our deal. Be sure to list each of the open opportunities at Edge.GPT will figure out what information it doesn’t already know, query Salesforce for it, and then write the email:

Nobody has pre-built a prompt for writing this email, so nobody decided in advance what data GPT should or would have access to. The user is, in essence, writing a prompt at runtime and GPT decides how to ground the prompt.

Subscript promptly so you don’t miss an update!

How does GPT know what data to get? We’ve told it, beforehand, what tables and columns exist that it can access. That is the magic of metadata. And while most magic tricks seem a lot less magical when you know how they work, it is also true that performing the magic yourself is almost always harder than it looks.4

So, How do we Provide the Metadata?

Take a look at the source file salesforce.py. In the routine __init__, you’ll see a bunch of lines that look like:

self.table2columns['OpportunityContactRole'] = ['ContactId', 'Id', 'IsPrimary', 'OpportunityId', 'Role']This lists the specific tables and columns (SObjects and fields) we want to tell GPT about. In addition, there’s also a bunch of lines that look like:

self.foreign_keys = [

('Account.PrimaryContactId__c','Contact.Id'), ('Account.ParentId','Account.Id'), ...This tells GPT how the tables are related to each other.5

From this information, GPT is able to generate SQL Statements that reference the correct entities in Salesforce.

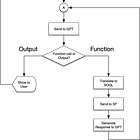

This information all gets assembled in a couple of steps:

The Foreign Key definitions and other hints get put together in

__init__.The method

get_schematranslates the table definitions into a prompt and adds in the keys and hint information.The prompt is then incorporated into the function definition provided to GPT in the routine

get_functions_parameter.

The goal is to have as compact a definition as possible that (a) gives GPT enough information to enable it to make the right queries at the right time but (b) doesn’t let it generate so complicated of a SELECT statement that it cannot be converted to SOQL.

Is it perfect? No, but it works well enough to be useful.

The rest of salesforce.py is responsible for actually executing queries that have been received from GPT. But it does not actually transform the SQL into SOQL: that’s the responsibility of the aptly named sql2soql.py.6

The Rain in Spain Falls Mainly on the Plain

Relational databases speak SQL. Salesforce has a custom dialect of that called SOQL. Sometimes, the two are exactly the same. Sometimes they are not. Here’s a couple of examples where they are not:

SQL: SELECT * FROM Task

SOQL: SELECT FIELDS(ALL) FROM TaskSQL: SELECT Id, Name FROM Account WHERE LastModifiedDate > '2023-01-01'

SOQL: SELECT Id, Name FROM Account WHERE LastModifiedDate > 2023-01-01Unfortunately, the more useful the SELECT statement, the more likely it is to be incompatible with SOQL.

So we have to find a way to translate SQL into SOQL. Doing that involves a large number of trade-offs in terms of cost and benefit. The perfect approach would be to completely parse the SQL statement, figure out the execution path, and then generate optimized SOQL to do that. But that perfect is a massive undertaking, not suitable for our needs here. A less perfect, but workable, approach is to look for specific problems in SQL and fix them. This is a heuristic approach and, while things might slip through from time to time (requiring writing a new rule to catch them), I think it’s the best approach for a project like this.

The file sql2soql.py contains one function (plus a bunch of testing code) that translates SQL into SOQL. It does this in the following steps:

Break apart the SELECT statement into its components (list of fields to retrieve, where clause, etc.) to make it easier to write the rules.

Apply a succession of rules to find and translate incompatible syntax from SQL to SOQL.

Sneak in some “enrichment” of the result set in anticipation of future needs.

Reassemble the SELECT statement in SOQL.

It’s driven with a ton of regular expressions. Sure, nobody likes writing them as they’re cryptic and finicky.7 But they’re the right tool.

Here’s an example:

where = re.sub(r"<=\s*LAST_DAY\(TODAY(\(\))?\)", "< NEXT_MONTH", where)In this example, we’re looking for cases where GPT has written:

<= LAST_DAY(TODAY())in a where clause. SOQL doesn’t support that, so it gets transformed into:

< NEXT_MONTHwhich achieves the same effect and is valid SOQL.

In terms of enrichment, one of the things it does is to add Id fields into selects when they are missing. It does this because follow-up questions often will require referencing previously fetched data and having the Ids saves time and effort by avoiding having to refetch them. The code looks for any field that ends in Id (that it knows about) and adds it to the list of fields retrieved. You bring back a bit of data that’s not asked for, but are able to have a better response time down the road.

For example, if the user asks “what are my accounts” and then asks for a list of opportunities at one of the accounts, GPT already has the ID for that account (from the previous question) to use in the Select statement.

Final Thoughts

The key take-away: give GPT a fish, and it can answer a question. Teach GPT to fish, and it can answer many questions, including ones you never thought of.

Next week we’ll look at how the calls into GPT are managed in this ChatBot, including how queries are generated and managed.

Resources

Previous articles in the series:

The code repository:

https://github.com/cmcguinness/ChatSF

It does force feed it information about who the user is and what the current time is, as the first is something that’s hard to figure out with a SELECT and the second is changing …second by second

To be fair to Salesforce, their prompt editor is perfectly designed for the task it solves, and they also have a mechanism called Skills that will allow you to define resources available to GPT for more open ended conversations. I’m just trying to illustrate the differences between a canned prompt that tries to steer the results and a metadata-driven one that lets GPT decide how best to solve a problem.

Puns not authorized by Owls Head.

True magic is not the “trick” but in performing the trick well.

You are, of course, free to play with these to tune the chatbot to whatever purpose you might have. Limiting the amount of metadata is a good thing because OpenAI charges by the size of input and the size of output, and if you always pass in a large schema every call is going to be more expensive and slower.

I did try to get GPT to generate SOQL directly, but I found that it still generated SQL. But it’s an avenue worth

This is a life saver for developing regular expressions: https://krillapps.com/patterns/